Scottish Area SQL Server User Group

Improved accessibility for Microsoft Office

Microsoft and its partner GW Micro Inc. now offer blind and visually impaired people improved usability of Microsoft Office. Customers with a licensed version of Microsoft Office 2010 or 2013 can download a free version of Window-Eyes, GW Micro's widely used and highly regarded screen reader.

Alongside data protection and privacy, security, and transparency, "access and participation" is an important pillar of our understanding of Corporate Technical Responsibility, our technological responsibility for all our products, services, and other offerings. Through the partnership with GW Micro, we can now achieve further significant improvements in this area of responsibility, in the accessible use of the Office suite including Word, Excel, PowerPoint, OneNote, and Outlook.

Further information and the Window-Eyes download are available at www.windoweyesforoffice.com. A more comprehensive look at our commitment, tools, and initiatives for better accessibility is available on our website, www.microsoft.com/enable.

Thomas Langkabel

National Technology Officer

From touching to feeling: a look ahead at future interaction between humans and computers

Even though touchscreens have dramatically changed how we use computers in recent years, and a wide range of modern devices, from the smartphone and the tablet to the Microsoft Surface, would be unthinkable without them, we still use our senses only to a limited extent when interacting with them. We see what happens on the screen and we hear what comes out of the speaker. But when we touch the screen with a finger, our perception is reduced to a minimum. We feel a cold, smooth surface, no matter what is shown on the screen. So far, the only feedback from our fingertip is "I am in contact with a surface". It will not stay that way.

My colleagues at Microsoft Research are exploring various ways to make the touch interaction between a human finger and a computer surface more expressive. With the emerging Internet of Things, entirely new materials, continuing miniaturization, and wireless power, almost any surface, from the tabletop and the cupboard door to the window, could in future offer access to the digital world. That makes it all the more important to make these surfaces not just touchable but something you can feel.

In this short clip with German subtitles, filmed in March in Redmond at Microsoft TechFest 2014, Hong Tan of Microsoft Research Asia shows current research approaches to bringing surfaces to life and giving users more natural interactions.

[View:~/cfs-file.ashx/__key/communityserver-blogs-components-weblogfiles/00-00-00-86-16/Touch-Research_5F00_kl.mp4:0:0]

A detailed English-language blog post on Hong Tan and her research, "Beyond Tapping and Sliding", can be found here on the Microsoft Research site.

Global initiative "Microsoft CityNext" promotes the city of the future

The global cities initiative "Microsoft CityNext" was presented in July at the Worldwide Partner Conference. With this worldwide initiative, Microsoft, together with more than 430,000 partners, supports cities in using IT to become safer, more efficient, greener, and more livable.

The Free and Hanseatic City of Hamburg is taking part in the initiative as the German partner city. Together with our partners, we want to develop, promote, and implement innovations through direct exchange and collaboration with our partner city. The aim is to design a long-term, sustainable action program tailored to the city's specific challenges, conditions, and priorities. Jörn Riedel, CIO of the Free and Hanseatic City of Hamburg, explains: "Cities are never finished; they have to be created anew every day. For us, IT is a tool for developing a new kind of administration and a new form of urban life. Each depends on the other: the city administration plays an outstanding role in economic development and the well-being of citizens. Microsoft's 'City Next' initiative is an opportunity for us to exchange ideas with digital pioneer cities around the world and to come into contact with the most innovative technologies."

The scope of action is made up of the following eight defined functional areas: city administration, public safety and justice, health and social services, education, energy and water supply, urban planning, tourism and culture, and transport and traffic. To fully exploit the potential of innovation, the initiative also relies on the new era of technologies and concepts such as cloud computing, big data, mobile applications, and social enterprise, with the aim of greater efficiency and, at the same time, better citizen service.

Cities everywhere have long been on many modernization paths, at different speeds and in different directions. They face many challenges in preparing for the future. With Microsoft CityNext, we want to be a helpful support in this, now and in the future.

In Germany, Microsoft has been illustrating its vision of a modern city since CeBIT 2012 with the online project "Neustadt – die digitale Stadt" ("Neustadt, the digital city"). Neustadt Digital is a key part of the German implementation of the worldwide Microsoft CityNext initiative and will therefore continue to offer guidance for innovative municipal solutions.

Windows 8 app provides easy access to the Bundestag

The German Bundestag is expanding its mobile online offering with a free "Deutscher Bundestag" app for the Windows 8 operating system. The Bundestag's app aims to create more transparency in political decision-making. Access to this information is to be made possible for all citizens at any time and on all common platforms.

The app combines up-to-date daily news with background reports on sittings and debates. General visitor information is also a focus of the Bundestag's mobile application. Here users can find all the information on attending plenary sittings in the visitors' gallery, on lectures in the plenary chamber, on guided tours of the buildings focusing on politics, history, art, or architecture, on exhibitions, and of course on visiting the Reichstag dome. Large-format exterior and interior views give a foretaste of a tour of the parliament or the view from the dome.

In the first version of the app, all plenary debates can be followed live. Speakers and agenda items, as well as the current background reports, are displayed in parallel with the sitting. Public committee meetings and hearings are also broadcast live. Anyone who has missed a sitting can access the online media library with all recordings from the 17th electoral term.

The app also provides an overview of all topics coming up in parliament in the current sitting week, as well as of the bills that were passed or rejected and the recorded votes.

The Bundestag's Windows 8 app impresses both with its technical implementation and with its design and usability. The app was developed in a cooperation between Babiel GmbH of Düsseldorf/Berlin and the students René Rimbach, Kai Brummund, Rafael Regh, Malte Götz, and Raphael Köllner from the Microsoft Student Partners program. "The development and further development of the 'Deutscher Bundestag' app has set international standards for parliamentary information services," said Dr. Rainer Babiel, managing partner of Babiel GmbH.

The app is available in the Microsoft Store.

ZIVIT concludes strategic agreement with Microsoft

The Zentrum für Informationsverarbeitung und Informationstechnik (ZIVIT, the Center for Information Processing and Information Technology), in its role as the official IT service center (Dienstleistungszentrum Informationstechnologie, DLZ IT) within the remit of the Federal Ministry of Finance (BMF), has concluded a comprehensive licensing agreement with Microsoft. In addition to ZIVIT itself, the agreement also covers the requirements for the workstations and infrastructure projects of the Bundesamt für zentrale Dienste und offene Vermögensfragen (BADV, the Federal Office for Central Services and Unresolved Property Issues) and of the customs administration in the Federal Republic of Germany.

The Enterprise Agreement covers large parts of the Microsoft client, Office, and server product and solution portfolio and has a term of three years.

"For the federal finance administration, this agreement is not only the necessary licensing basis and provisioning for the numerous projects to modernize, standardize, and consolidate the ministry's internal IT services; above all, it is also an important step in the further implementation of the DLZ IT strategy together with the BMF and ZIVIT," says Johannes Rosenboom, Sales Director for Federal Administration in the Public Sector division at Microsoft Deutschland GmbH.

Caching SSRS Reports for Performance

I've been fairly quiet on the blogging front recently, but I have been busy working with SQL Server Reporting Services (SSRS)! I've also been fortunate enough to present a session to customers on SSRS scalability at a recent Business Intelligence Operations Day in Reading, UK. One thing that struck me from that day was that very few people are aware of the caching options available in Reporting Services and how they can be used to improve report processing performance. A quick show of hands showed that no one in my session was making use of caching, and only one person required real-time reporting on their data.

When you create a report and deploy it to your report server, the default behaviour is to always run the report with the most recent data and not to implement any caching. Actually, SSRS does implement session level caching for each user request "under the covers" to improve report performance and to provide a consistent experience across a single browser session. Data remains consistent during the report session and is unaffected by changes in the underlying data source. This article provides a good discussion of report session caching.

Unless you need real-time data in your reports, hitting the underlying data sources each time you run the report is unnecessary and can hurt performance. In most cases, the business requirement is to report on historical data (whether that is from the last 15 minutes, hour, day or week), so we can implement caching to improve the report processing times based on these requirements. If we have no caching, a new instance of the report is created for each user who opens or requests the report; each new instance contains the results of a new query. With this approach, if ten users open the report at the same time, ten queries are sent to the data source for processing. If the report server load is heavy, or if the reports take a significant time to retrieve data and process, then this can have a negative impact on the performance of the Report Server and the underlying databases executing the report queries.

We can implement 2 forms of caching in Reporting Services: temporary cached reports and report snapshots. A temporary cached instance of a report is based on the intermediate format of a report (report data merged with the layout information before any rendering is applied). The report server will cache one instance of a report based on the report name. However, if a report can contain different data based on query parameters, multiple versions of the report may be cached at any time. For example, suppose you have a parameterised report that takes a region code as a parameter value. If four different users specify four unique region codes, four cached copies will be created. The first user who runs the report with a unique region code creates a cached report that contains data for that region. Subsequent users who request a report using the same region code get the cached copy.

With this approach, if ten users open the report, only the first request results in report processing. The report is subsequently cached, and the remaining nine users view the cached report. Cached reports are removed from the cache at intervals that you define. You can specify intervals in minutes, or you can schedule a specific date and time to empty the cache. Not all reports can be cached. If a report prompts users for credentials or uses Windows Authentication, it cannot be cached unless you change the data source to use stored credentials instead. Certain other events also invalidate the cache and cause temporary cached copies of a report to be flushed: the report definition is modified, the report parameters are modified, the data source credentials change, the report execution options change, or the cache expiration timeout is reached.

A report snapshot is a report that contains layout information and data that is retrieved at a specific point in time. A report snapshot is usually created and refreshed on a schedule, allowing you to time exactly when report and data processing will occur. If a report is based on queries that take a long time to run, or on queries that use data from a data source that you prefer no one access during certain hours, you should run the report as a snapshot. A report snapshot is stored in the intermediary form in the ReportServer database, where it is subsequently retrieved when a user or subscription requests the report. When a report snapshot is updated, it is overwritten with a new instance. The report server does not save previous versions of a report snapshot unless you specifically set options to add it to report history.

Not all reports can be configured to run as a snapshot. For example, you cannot create a snapshot for a report that prompts users for credentials or uses Windows integrated security to get data for the report. Also, if you want to run a parameterised report as a snapshot, you must specify a default parameter to use when creating the snapshot. In contrast with reports that run on demand, it is not possible to specify a different parameter value for a report snapshot once the report is open.

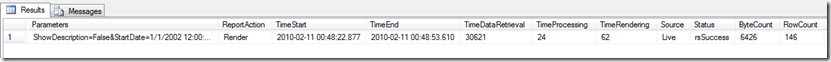

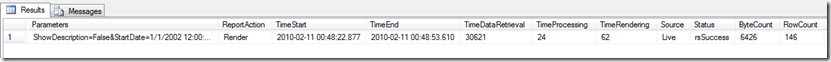

So how do we identify which reports to cache to improve performance? By default, Report Server saves all the details of reports that have executed in the Report Server database. This information can be accessed via the ExecutionLog2 view in SSRS 2008 or by this method in SSRS 2005. Robert Bruckner has written an excellent blog post detailing the information contained in the ExecutionLog2 view, which is highly recommended reading. Using this data, we can analyse where these reports are spending most time during report execution (data retrieval, report processing or rendering). There's a great article on the SQLCAT site on Reporting Services Performance Optimisations which can help you to address some of these issues. Long-running reports or frequently accessed reports are going to give us greater performance improvements, so these would be good candidates for caching. The figure below shows an extract of data after running the following statement on the Report Server database:

SELECT * FROM [ReportServer].[dbo].[ExecutionLog2]

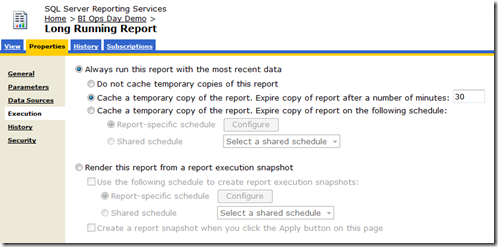

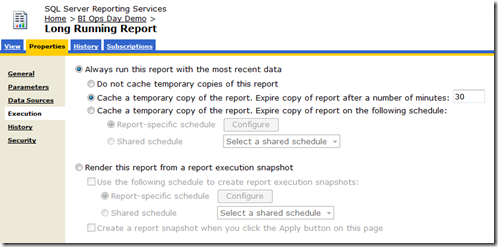

So we can see that this report is taking 30 seconds to retrieve the data from the underlying data source. We can specify that this report is cached and that the cache will expire every 30 minutes by adjusting the Report Execution properties as shown below:

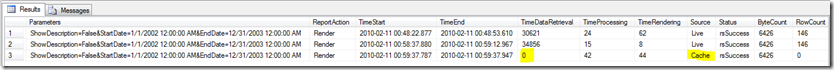

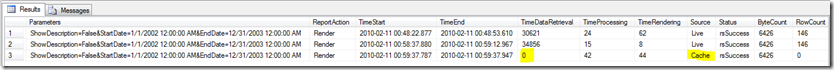

The next time someone requests the report, the data will be retrieved from the data source and then cached in the ReportServerTempDB. Any subsequent requests from any user for that report (with the same parameter values) will be rendered from the cached copy. The output from the ExecutionLog2 view will now be as follows:

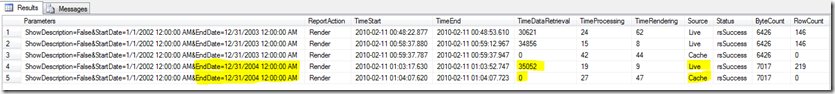

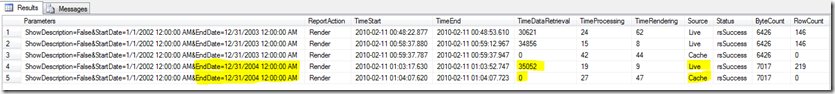

As we can see, the report is now rendered from cache and the data source is not queried, resulting in a huge performance improvement. If we change the parameters of the report, then we will go back to the data source to retrieve the new data, and a temporary cached copy of the report will be created for the new combination of parameters.
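As a rough sketch of how the same view can be used both to shortlist caching candidates and to confirm that caching is taking effect, the query below groups executions by report and by the Source column. The seven-day window and the column aliases are illustrative choices, not something prescribed by Reporting Services.

```sql
-- Per report and source (for example Live, Cache or Snapshot): how often
-- the report ran and where the time went. Timing columns are in milliseconds.
SELECT ReportPath,
       Source,
       COUNT(*)               AS Executions,
       AVG(TimeDataRetrieval) AS AvgDataRetrievalMs,
       AVG(TimeProcessing)    AS AvgProcessingMs,
       AVG(TimeRendering)     AS AvgRenderingMs
FROM   [ReportServer].[dbo].[ExecutionLog2]
WHERE  TimeStart >= DATEADD(DAY, -7, GETDATE())   -- illustrative window
GROUP  BY ReportPath, Source
ORDER  BY ReportPath, Source;
```

Reports with many Live executions and a high average data retrieval time are the obvious candidates. Once caching is enabled, most of their executions should move to the Cache row.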

I worked recently with a customer who had set up caching for a report but was using dynamic date functions in the values for its parameters. Every time the report was executed it had different values for the start and end date parameters, so they were constantly hitting the database with new queries even though they only needed to report on the data from the previous evening. We were able to schedule a snapshot of the report to run each evening and then render the reports from that snapshot throughout the day. Report execution time went from 20 minutes to a few milliseconds as a result! The benefit of scheduling a snapshot is that the data is already pre-loaded and ready for users the next morning. We don't suffer the initial performance hit that the first user would experience (in order to refresh data) if we used a temporary cached report.

As you can see, utilising SSRS's caching mechanisms can provide a very powerful way to improve report processing performance and to lighten the load on your database servers!

CU #3 for SQL Server 2008 Released

On January 19, 2009 Microsoft shipped the Third Cumulative Update for SQL Server 2008 RTM.

This CU represents 36 Resolved Issues and 30 Unique Customer Requests. More details and download details can be found here:

https://support.microsoft.com/kb/960484/en-us

DBCC CHECKDB

DBCC CHECKDB checks the logical and physical integrity of all objects in the specified database by performing the following:

- Runs DBCC CHECKALLOC on the database

- Runs DBCC CHECKTABLE on every table and view in the database

- Runs DBCC CHECKCATALOG on the database

- Validates the contents of every indexed view in the database

- Validates the service broker data in the database

DBCC CHECKALLOC:

- Validates the allocation information maintained in the GAM, SGAM and IAM pages

- Performs cross check to verify that every extent that the GAM or SGAM indicates has been allocated really has been allocated, and that any extents not allocated are indicated in the GAM and SGAM as not allocated

- Verifies the IAM chain for each allocation unit, including the consistency on the links between the IAM pages in the chain

- Verifies that all extents marked as allocated to the allocation unit really are allocated

DBCC CHECKTABLE:

- Performs a comprehensive set of checks on the structure of a table, and by default these are logical and physical

- With the PHYSICAL_ONLY option, you can exclude the logical checks and only validate the physical structure of the page and record headers

- PHYSICAL_ONLY is a lightweight check of the physical consistency of the table and of common hardware failures that can compromise data

- Indexed views are verified by regenerating the view's rowset from the underlying select statement definition and comparing the results with the data stored in the indexed view

- SQL performs 2 left anti-semi joins between the 2 rowsets

DBCC CHECKCATALOG:

- Performs more than 50 cross-checks between various metadata tables

- Errors that it finds cannot be fixed by running the DBCC operation with any of the REPAIR options

SERVICE BROKER Data is Verified:

- Only way to check the service broker data as there is no specific DBCC command to perform the checks

- DBCC CHECKFILEGROUP can also be considered to be a subset of CHECKDB because it performs DBCC CHECKTABLE on all tables and views in a specified filegroup
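The component checks described above can also be run individually. The following is a minimal sketch that assumes the AdventureWorks sample database and its HumanResources.Employee table; substitute your own database and table names.

```sql
USE AdventureWorks;
GO

-- Allocation structures (GAM, SGAM, IAM) of the current database
DBCC CHECKALLOC;

-- Full logical and physical checks on a single table
DBCC CHECKTABLE ('HumanResources.Employee');

-- Physical structure only, skipping the logical checks
DBCC CHECKTABLE ('HumanResources.Employee') WITH PHYSICAL_ONLY;

-- Metadata cross-checks for the current database
DBCC CHECKCATALOG;

-- Tables and indexed views in one filegroup
-- (the primary filegroup when none is named)
DBCC CHECKFILEGROUP;
```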

DBCC will run faster on a SQL 2005 database upgraded from 2000 but with no 2005 features or indexed views. On a new 2005 database, some of the logical checks added to complement new features are necessarily complex and add to the runtime when invoked.

All the DBCC validation commands use database snapshots to keep validation operations from interfering with ongoing database operations and to allow the validation operation to see a quiescent, consistent view of the data. A snapshot is created at the beginning of the CHECK command, and no locks are acquired on any of the objects being checked. The actual check operation is performed against the snapshot. Unlike regular snapshots, the "snapshot file" that DBCC CHECKDB creates cannot be configured and is invisible to the end user. It always uses space on the same volume as the database being checked. This capability is only available when your data directory is on an NTFS partition.

- You can avoid creating a snapshot (to save disk space for example) by using the WITH TABLOCK option with the DBCC command

- If you are using one of the repair options, a snapshot is not created as the database is in single-user mode, so no other transactions can be altering data

- Without TABLOCK, DBCC is considered to be an online operation

- DBCC validation checks require a significant amount of space in tempdb, as SQL Server needs to temporarily store data about pages and structures observed early in the check so that it can be compared against pages and structures observed later during the DBCC scan

- To determine the space tempdb needs in advance, run CHECKDB with the ESTIMATEONLY option:

SET NOCOUNT ON;

DBCC CHECKDB ('AdventureWorks') WITH ESTIMATEONLY;

- This is computed as a worst-case estimate and assumes there will be no room in memory for any of the sort operations required
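For comparison, a minimal sketch of the TABLOCK variant mentioned in the list above, again assuming the AdventureWorks sample database. Because it takes locks instead of creating the internal snapshot, it is best suited to a maintenance window.

```sql
-- Check the database using locks rather than an internal snapshot.
-- Saves the snapshot's disk space, but the check is no longer an
-- online operation while the locks are held.
DBCC CHECKDB ('AdventureWorks') WITH TABLOCK;
```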

VALIDATION CHECKS

SQL Server 2005 includes a set of logical validity checks to verify that data is appropriate for the column's data type. These can be expensive and affect server performance, so you can disable them, along with other non-core logical validations, by using the PHYSICAL_ONLY option. All new databases in SQL Server 2005 have the DATA_PURITY logical validations enabled by default. For upgraded databases, you must run DBCC CHECKDB with the DATA_PURITY option once, preferably immediately after the upgrade:

DBCC CHECKDB ('dbname') WITH DATA_PURITY

After the purity check completes without any errors, performing the logical validations is the default behavior in all future executions of DBCC CHECKDB and there is no way to change this default. You can override the default by using the PHYSICAL_ONLY option. This skips the data purity check but also skips any checks that have to analyze the contents of individual rows of data and limits the checks that DBCC performs.
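A short sketch of the two modes side by side, using the same 'dbname' placeholder as above. NO_INFOMSGS is added here only to keep the output short and is not required.

```sql
-- Lightweight run: physical structure only. Skips the data purity check
-- and the other checks that analyze the contents of individual rows.
DBCC CHECKDB ('dbname') WITH PHYSICAL_ONLY;

-- Full run: includes the logical validations.
DBCC CHECKDB ('dbname') WITH NO_INFOMSGS;
```

One way to use them is to run the lightweight form frequently and keep the full run for quieter periods.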

DBCC REPAIR OPTIONS

There are three DBCC repair options:

- REPAIR_ALLOW_DATA_LOSS

- REPAIR_REBUILD

- REPAIR_FAST (legacy backward compatibility only)

Almost all errors can be repaired except:

- DBCC CHECKCATALOG errors

- Data purity errors found through DBCC CHECKTABLE

When you run DBCC CHECKDB with one of the repair options:

- SQL runs DBCC CHECKALLOC and repairs what it can

- Then runs DBCC CHECKTABLE on all tables and makes the appropriate repairs on all the tables

- The list of repairs is ranked to avoid duplication of effort (for example, rebuilding an index and then removing a page from the table would duplicate effort)

REPAIR_ALLOW_DATA_LOSS option:

- SQL Server tries to repair almost ALL detected errors.

- For almost any severe error, some data will be lost when the repair is run.

- Rows may be deleted if they are found to be inconsistent, such as when a computed column value is incorrect.

- Whole pages can be deleted if checksum errors are discovered

- During the repair, no attempt is made to maintain any constraints on the tables, or between tables

- Some errors SQL Server won't even try to repair, particularly if the GAM or SGAM pages themselves are corrupt or unreadable.

REPAIR_REBUILD option:

- Performs minor, relatively fast repair actions, such as repairing extra keys in a non-clustered index

- Performs time-consuming repairs, such as rebuilding indexes

- These repairs can be performed without risk of data loss

- After successful completion, database is physically consistent and online but may not be in a logically consistent state in terms of constraints and business rules

Use repair options only as a last resort; where possible, restore the database from backup instead. If you are going to run REPAIR_ALLOW_DATA_LOSS, back up your database first. You can run the repair options for DBCC inside a user-defined transaction, which means you can perform a ROLLBACK to undo the repairs. The exception is when you are running the repair options on a database in EMERGENCY mode. If a repair in emergency mode fails, there are no other options except to restore the database from a backup.

Each individual repair in a DBCC operation runs in its own system transaction, which means that if a repair is not possible, it will not affect any other repairs.

Create a snapshot before the repair is initiated, start a transaction, then run the REPAIR option. Before committing or rolling back, you can compare the repaired database with the original snapshot. If you are not satisfied with the changes made, roll back the repair operation.
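The sequence just described might look roughly like the sketch below. Everything specific in it is an assumption for illustration: the AdventureWorks database, the logical file name AdventureWorks_Data, the snapshot name and path, and the table used for the comparison. Treat it as an outline to adapt and try on a test copy first, not a tested recovery procedure.

```sql
-- 1. Keep a read-only view of the pre-repair state.
--    Logical file name and path are assumptions; adjust to your database.
CREATE DATABASE AdventureWorks_PreRepair
ON (NAME = AdventureWorks_Data,
    FILENAME = 'C:\Snapshots\AdventureWorks_PreRepair.ss')
AS SNAPSHOT OF AdventureWorks;
GO

-- 2. The repair options require single-user mode.
ALTER DATABASE AdventureWorks SET SINGLE_USER WITH ROLLBACK IMMEDIATE;
GO

-- 3. Run the repair inside a user transaction so it can be undone.
BEGIN TRANSACTION;

DBCC CHECKDB ('AdventureWorks', REPAIR_ALLOW_DATA_LOSS);

-- 4. Compare the repaired data with the snapshot, here by row count.
SELECT (SELECT COUNT(*) FROM AdventureWorks.HumanResources.Employee)
           AS RowsAfterRepair,
       (SELECT COUNT(*) FROM AdventureWorks_PreRepair.HumanResources.Employee)
           AS RowsBeforeRepair;

-- 5. Discard the repairs (or COMMIT TRANSACTION to keep them).
ROLLBACK TRANSACTION;
GO

ALTER DATABASE AdventureWorks SET MULTI_USER;
GO
```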

PROGRESS REPORTING:

- Use sys.dm_exec_requests

- Command column indicates current DBCC command phase

- percent_complete represents the percentage completion of the current DBCC command phase

- estimated_completion_time represents the estimated time remaining, in milliseconds
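A minimal sketch of such a progress query, run from a second connection while the DBCC command is executing. The conversion to seconds and the column alias are just for readability.

```sql
-- Progress of any DBCC command currently running on the instance
SELECT session_id,
       command,                                          -- current DBCC phase
       percent_complete,
       estimated_completion_time / 1000.0 AS estimated_seconds_remaining,
       start_time
FROM   sys.dm_exec_requests
WHERE  command LIKE 'DBCC%';
```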

DBCC BEST PRACTICES

Use CHECKDB together with the CHECKSUM database option (PAGE_VERIFY CHECKSUM) and a sound backup strategy to protect the integrity of the database from hardware failures

Perform DBCC CHECKDB WITH DATA_PURITY after upgrading a database to SQL Server 2005 to check for invalid data values

Make sure you have enough disk space available to accommodate the database snapshot

Make sure you have space available in tempdb to allow the DBCC command to run. Use the ESTIMATEONLY option to find out how much space is required in tempdb

Use REPAIR_ALLOW_DATA_LOSS only as a last resort
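Assuming the CHECKSUM option mentioned above refers to the database's page verification setting, the following sketch shows how to inspect that setting and turn it on. AdventureWorks is again only a placeholder.

```sql
-- Which page verification option is each database using?
SELECT name, page_verify_option_desc
FROM   sys.databases
ORDER  BY name;

-- Enable checksum page verification for one database.
ALTER DATABASE AdventureWorks SET PAGE_VERIFY CHECKSUM;
```

A checksum is only written when a page is next modified, so pages that have not changed since the option was enabled are not yet protected by it.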

Detecting SQL Server 2005 Blocking

Database queries should be able to execute concurrently without errors and within acceptable wait times. When they don't, and when the queries behave correctly when executed in isolation, you will need to investigate the causes of the blocking. Generally, blocking is caused when a SQL Server process is waiting for a resource that another process has yet to release. These waits are most often caused by requests for locks on user resources. A full list of SQL Server wait types can be found here.

Prior to SQL Server 2005, blocking could be detected using the sp_blocker_pss80 stored procedure, sp_who2, Perfmon and SQL Profiler. SQL Server 2005 adds some important new tools to this toolkit. These tools include:

- Enhanced System Monitor counters (Perfmon)

- DMVs: sys.dm_os_wait_stats, sys.dm_os_waiting_tasks and sys.dm_tran_locks

- Blocked Process Report in SQL Trace

- SQLDiag Utility

In System Monitor, the Processes Blocked counter in the SQLServer:General Statistics object shows the number of blocked processes. The Lock Waits counter from the SQLServer:Wait Statistics object can be added to determine the count and duration of the waiting that is occurring. The Processes Blocked counter gives an idea of the scale of the problem but only provides a summary, so further drill-down is required. DMVs such as sys.dm_os_waiting_tasks and sys.dm_tran_locks give accurate and detailed blocking information.
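The same summary figures can be read from T-SQL. The sketch below pulls the Processes Blocked counter from sys.dm_os_performance_counters and the cumulative lock waits from sys.dm_os_wait_stats. Filtering on the LCK_M_ prefix is one reasonable way to isolate lock waits, not the only one.

```sql
-- Current number of blocked processes, as exposed to System Monitor
SELECT object_name, counter_name, cntr_value
FROM   sys.dm_os_performance_counters
WHERE  counter_name = 'Processes blocked';

-- Cumulative lock waits since the instance started (or the stats were cleared)
SELECT wait_type, waiting_tasks_count, wait_time_ms, max_wait_time_ms
FROM   sys.dm_os_wait_stats
WHERE  wait_type LIKE 'LCK[_]M[_]%'
  AND  waiting_tasks_count > 0
ORDER  BY wait_time_ms DESC;
```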

The sys.dm_os_waiting_tasks DMV returns a list of all waiting tasks, along with the blocking task if known. There are a number of advantages to using this DMV over the sp_who2 or the sysprocesses view for detecting blocking problems:

- The DMV only shows those processes that are waiting

- sys.dm_os_waiting_tasks returns information at the task level, which is more granular than the session level.

- Information about the blocker is also shown

- sys.dm_os_waiting_tasks returns the duration of the wait, enabling filtering to show only those waits that are long enough to cause concern

The sys.dm_os_waiting_tasks DMV returns all waiting tasks, some of which may be unrelated to blocking and be due to I/O or memory contention. To refine your focus to only lock-based blocking, join it to the sys.dm_tran_locks DMV.
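A minimal sketch of that join follows. The five-second filter is an arbitrary illustration of filtering on wait duration; pick a value that matches what you consider a problem.

```sql
-- Tasks that are waiting on a lock, who is blocking them, and for how long
SELECT wt.session_id,
       wt.blocking_session_id,
       wt.wait_duration_ms,
       wt.wait_type,
       tl.resource_type,
       tl.resource_database_id,
       tl.request_mode
FROM   sys.dm_os_waiting_tasks AS wt
JOIN   sys.dm_tran_locks       AS tl
       ON wt.resource_address = tl.lock_owner_address
WHERE  wt.wait_duration_ms > 5000      -- illustrative threshold (5 seconds)
ORDER  BY wt.wait_duration_ms DESC;
```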

The SQL Trace Blocked Process Report is another useful way to identify blocking. You can automatically trigger an event when a process has been blocked for more than a specified amount of time. You use the sp_configure command to set the advanced option blocked process threshold to a user defined value:

exec sp_configure 'show advanced options', 1;

reconfigure;

go

exec sp_configure 'blocked process threshold', 30;

reconfigure;

This sets the threshold to 30 seconds. You can then start a SQL Trace and select the Blocked process report event class in the Errors and Warnings group. This article explains the event class in more detail; it is important to choose the TextData column in order to inspect the contents of the report. The event will fire when a blocked process is detected, and the TextData column will return an XML-formatted set of data. Data for the blocked process is shown first, and then the blocking process.

The benefit of the Blocked Process Report is that you have the blocking events recorded on disk in a trace file, along with the time and duration of the blocking. The threshold option can be adjusted to limit the information returned to the longest-running blocks.
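Once the trace has been written to disk, the reports can be read back with T-SQL. This sketch assumes a hypothetical trace file at C:\Traces\blocking.trc, and it looks the event class up by name in sys.trace_events instead of hard-coding its numeric ID.

```sql
-- Read blocked process reports back from a saved trace file.
-- The file path is a placeholder; point it at your own trace.
SELECT t.StartTime,
       t.Duration,
       t.DatabaseID,
       t.TextData            -- XML report: blocked process, then blocker
FROM   sys.fn_trace_gettable(N'C:\Traces\blocking.trc', DEFAULT) AS t
JOIN   sys.trace_events AS te
       ON t.EventClass = te.trace_event_id
WHERE  te.name = 'Blocked process report'
ORDER  BY t.StartTime;
```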

The SQLDiag utility has been enhanced and provides information about your current system. It can run as an executable from the command line or as a service. You can read the output directly, or download the free SQLNexus utility to get reports for waits and blocking. You can also use the Microsoft PSS PerfStats collection of scripts in combination with SQLDiag to get blocking information.

Enabling Kerberos Authentication for Reporting Services

Recently, I've helped several customers with Kerberos authentication problems with Reporting Services and Analysis Services, so I've decided to write this blog post and pull together some useful resources in one place (there are 2 whitepapers in particular that I found invaluable configuring Kerberos authentication, and these can be found in the references section at the bottom of this post). In most of these cases, the problem has manifested itself with the Login failed for User 'NT Authority\Anonymous' ("double-hop") error.

By default, Reporting Services uses Windows Integrated Authentication, which includes the Kerberos and NTLM protocols for network authentication. Additionally, Windows Integrated Authentication includes the negotiate security header, which prompts the client to select Kerberos or NTLM for authentication. The client can access reports which have the appropriate permissions by using Kerberos for authentication. Servers that use Kerberos authentication can impersonate those clients and use their security context to access network resources.

You can configure Reporting Services to use both Kerberos and NTLM authentication; however this may lead to a failure to authenticate. With negotiate, if Kerberos cannot be used, the authentication method will default to NTLM. When negotiate is enabled, the Kerberos protocol is always used except when:

- Clients/servers that are involved in the authentication process cannot use Kerberos.

- The client does not provide the information necessary to use Kerberos.

An in-depth discussion of Kerberos authentication is beyond the scope of this post. However, when users execute reports that are configured to use Windows Integrated Authentication, their logon credentials are passed from the report server to the server hosting the data source. Delegation needs to be set on the report server and Service Principal Names (SPNs) set for the relevant services. When a user processes a report, the request must go through a Web server on its way to a database server for processing. Kerberos authentication enables the Web server to request a service ticket from the domain controller, impersonate the client when passing the request to the database server, and then restrict the request based on the user's permissions. Each time a server is required to pass the request to another server, the same process must be used.

Kerberos authentication is supported in both native and SharePoint integrated mode, but I'll focus on native mode for the purpose of this post (I'll explain configuring SharePoint integrated mode and Kerberos authentication in a future post). Configuring Kerberos avoids the authentication failures caused by double-hop issues. These double-hop errors occur when a user's Windows domain credentials can't be passed to another server to complete the user's request. In the case of my customers, users were executing Reporting Services reports that were configured to query Analysis Services cubes on a separate machine using Windows Integrated security. The double-hop issue occurs because NTLM credentials are valid for only one network hop; subsequent hops result in anonymous authentication.

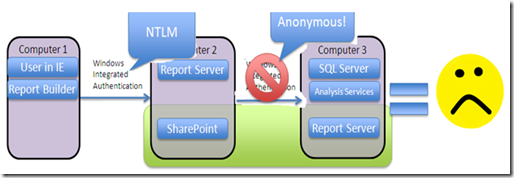

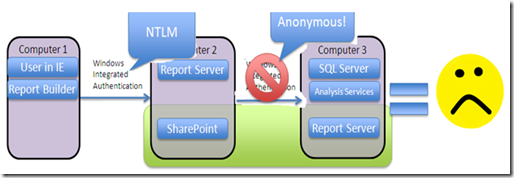

The client attempts to connect to the report server by making a request from a browser (or some other application), and the connection process begins with authentication. With NTLM authentication, client credentials are presented to Computer 2. However, Computer 2 can't use the same credentials to access Computer 3 (so we get the Anonymous login error). To access Computer 3 it is necessary to configure the connection string with stored credentials, which is what a number of customers I have worked with have done to work around the double-hop authentication error.

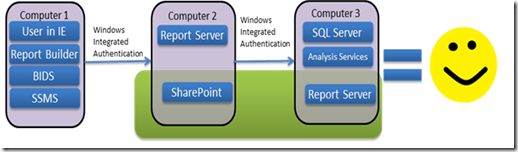

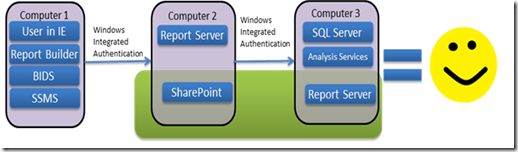

However, to get the benefits of Windows Integrated security, a better solution is to enable Kerberos authentication. Again, the connection process begins with authentication. With Kerberos authentication, the client and the server must demonstrate to one another that they are genuine, at which point authentication is successful and a secure client/server session is established.

In the illustration above, the tiers represent the following:

- Client tier (computer 1): The client computer from which an application makes a request.

- Middle tier (computer 2): The Web server or farm where the client's request is directed. Both the SharePoint and Reporting Services server(s) comprise the middle tier (but we're only concentrating on native deployments just now).

- Back end tier (computer 3): The Database/Analysis Services server/Cluster where the requested data is stored.

In order to enable Kerberos authentication for Reporting Services it's necessary to configure the relevant SPNs, configure trust for delegation for server accounts, configure Kerberos with full delegation and configure the authentication types for Reporting Services. These steps are outlined in greater detail in the "Manage Kerberos Authentication Issues in a Reporting Services Environment" whitepaper in the resources section at the end of this article.

Service Principal Names (SPNs) are unique identifiers for services and identify the account's type of service. If an SPN is not configured for a service, a client account will be unable to authenticate to the servers using Kerberos. You need to be a domain administrator to add an SPN, which can be added using the SetSPN utility. For Reporting Services in native mode, the following SPNs need to be registered:

--SQL Server Service

SETSPN -S mssqlsvc/servername:1433 Domain\SQL

For named instances, or if the default instance is listening on a different port, the specific port number should be used.

--Reporting Services Service

SETSPN -S http/servername Domain\SSRS

SETSPN -S http/servername.domain.com Domain\SSRS

The SPN should be set for both the NetBIOS name of the server and the FQDN. If you access the reports using a host header or DNS alias, then that should also be registered:

SETSPN -S http/www.reports.com Domain\SSRS

--Analysis Services Service

SETSPN -S msolapsvc.3/servername Domain\SSAS
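Because each service usually needs an SPN for the NetBIOS name, the FQDN and any alias, it can help to generate the command list rather than type it by hand. The sketch below only builds the command strings for review; it does not run SetSPN. The function name and its parameters are invented for this example, and the host and account names are the placeholders used above.

```python
def build_setspn_commands(service_class, netbios_name, dns_domain, account,
                          port=None, aliases=()):
    """Return SETSPN -S commands for the NetBIOS name, the FQDN and any aliases."""
    hosts = [netbios_name, f"{netbios_name}.{dns_domain}", *aliases]
    # SQL Server SPNs carry a port suffix; HTTP SPNs normally do not.
    suffix = f":{port}" if port is not None else ""
    return [f"SETSPN -S {service_class}/{host}{suffix} {account}" for host in hosts]


# Reporting Services example using the placeholder names from this post.
ssrs_commands = build_setspn_commands(
    "http", "servername", "domain.com", r"Domain\SSRS",
    aliases=["www.reports.com"],
)
for command in ssrs_commands:
    print(command)
```

The `-S` switch checks for duplicate SPNs before adding one, which is why it is used here in preference to `-A`.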

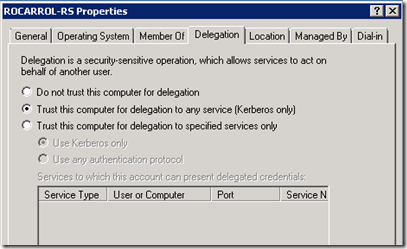

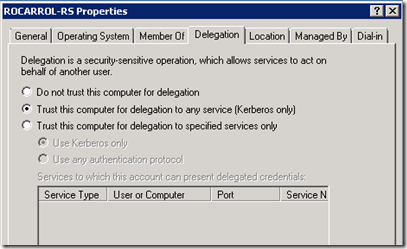

Next, you need to configure trust for delegation, which refers to enabling a computer to impersonate an authenticated user to services on another computer:

Location | Description |

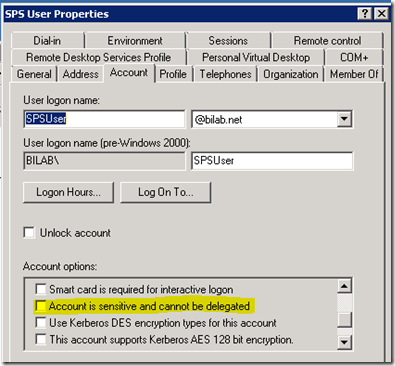

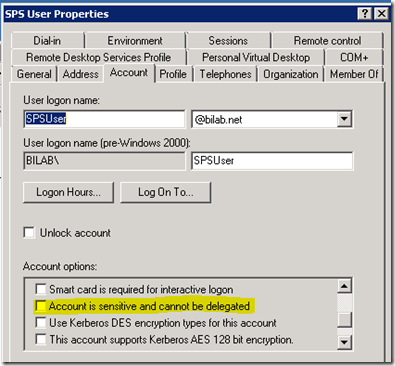

Client | 1. The requesting application must support the Kerberos authentication protocol. 2. The user account making the request must be configured on the domain controller. Confirm that the following option is not selected: Account is sensitive and cannot be delegated. |

Servers | 1. The service accounts must be trusted for delegation on the domain controller. 2. The service accounts must have SPNs registered on the domain controller. If the service account is a domain user account, the domain administrator must register the SPNs. |

In Active Directory Users and Computers, verify that the domain user accounts used to access reports have been configured for delegation (the 'Account is sensitive and cannot be delegated' option should not be selected):

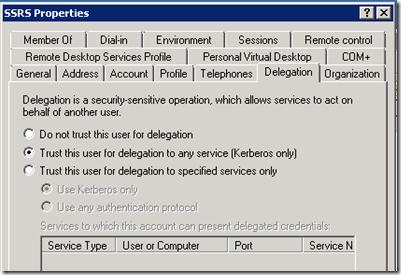

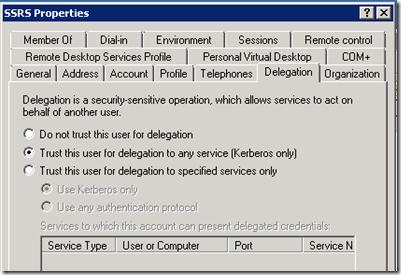

We then need to configure the Reporting Services service account and computer to use Kerberos with full delegation:

We also need to do the same for the SQL Server or Analysis Services service accounts and computers (depending on what type of data source you are connecting to in your reports).

Finally, and this is the part that sometimes gets overlooked, we need to configure the authentication type correctly for Reporting Services to use Kerberos authentication. This is configured in the Authentication section of the RSReportServer.config file on the report server.

<Authentication>

<AuthenticationTypes>

<RSWindowsNegotiate/>

</AuthenticationTypes>

<EnableAuthPersistence>true</EnableAuthPersistence>

</Authentication>

This will enable Kerberos authentication for Internet Explorer. For other browsers, see the link below. The report server instance must be restarted for these changes to take effect.
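Since this setting is easy to miss, a small check can confirm which authentication types a report server is configured with. The sketch below parses just the Authentication fragment shown above; in the real RSReportServer.config that element sits inside a larger document, so the lookup path would need adjusting. The function name is made up for this example.

```python
import xml.etree.ElementTree as ET

# The Authentication section as shown above, used here as test input.
CONFIG_FRAGMENT = """
<Authentication>
  <AuthenticationTypes>
    <RSWindowsNegotiate/>
  </AuthenticationTypes>
  <EnableAuthPersistence>true</EnableAuthPersistence>
</Authentication>
"""


def configured_auth_types(authentication_xml):
    """Return the element names listed under AuthenticationTypes."""
    root = ET.fromstring(authentication_xml.strip())
    types = root.find("AuthenticationTypes")
    return [child.tag for child in types] if types is not None else []


auth_types = configured_auth_types(CONFIG_FRAGMENT)
negotiate_enabled = "RSWindowsNegotiate" in auth_types
```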

Once these changes have been made, all that's left to do is test that Kerberos authentication is working properly by running a report from Report Manager that is configured to use Windows Integrated authentication (connecting to either an Analysis Services or a SQL Server back end).

Resources:

Manage Kerberos Authentication Issues in a Reporting Services Environment

https://download.microsoft.com/download/B/E/1/BE1AABB3-6ED8-4C3C-AF91-448AB733B1AF/SSRSKerberos.docx

Configuring Kerberos Authentication for Microsoft SharePoint 2010 Products

https://www.microsoft.com/download/en/details.aspx?displaylang=en&id=23176

How to: Configure Windows Authentication in Reporting Services

https://msdn.microsoft.com/en-us/library/cc281253.aspx

RSReportServer Configuration File

https://msdn.microsoft.com/en-us/library/ms157273.aspx#Authentication

Planning for Browser Support

https://msdn.microsoft.com/en-us/library/ms156511.aspx

Forthcoming UK SQL User Group Meetings - Leeds, Edinburgh, London

Regional Meetings of the UK SQL Server User Group coming up in the next couple of weeks:

LEEDS AREA SQL SERVER USER GROUP: THURSDAY 29TH MAY 18:30 - 21:00 : LEEDS

https://sqlserverfaq.com/?eid=116

Martin Bell - What's New in SQL Server 2008 T-SQL. Martin will talk about some of the many new features in T-SQL that will be available in SQL Server 2008.

Jim Brayshaw - Deploying database upgrades. Jim will talk about the key aspects of building and releasing enterprise software.

SCOTTISH AREA SQL SERVER USER GROUP: WEDNESDAY 4TH JUNE 18:30 - 21:00 : EDINBURGH

https://sqlserverfaq.com/?eid=115

Richard Fennell - Using Visual Studio Team Edition for DB Professionals.

Richard will cover the complete development life cycle using Visual Studio Team Edition for DB Professionals, addressing issues such as schema management, source control, testing, data generation and deployment.

SQL SERVER 2008 UK USERGROUP LONDON LAUNCH EVENT: THURSDAY 19TH JUNE

https://sqlserverfaq.com?eid=114

As part of the SQL Server Launch wave we are holding a usergroup meeting to celebrate the launch (not RTM).

We're going to run this as an open session so you'll be able to ask us to cover the areas you want us to cover.

We have loads of giveaways, including Vista, training vouchers, Technet subscriptions and SQL Server licenses.

Registration is at 5.30pm; the evening will start at 6pm and finish at 9pm.

6pm - 6.30pm

Round Table Discussion

Update on what's been going on and is going on in the SQL Server space.

Bring your SQL problems and ask the audience, bounce ideas - anything related to SQL Server.

The topics covered in the remaining sessions could be any of the following. Simon and Jasper will aim to answer questions and demo the features you want to know about.

T-SQL improvements, new data types, changes to CLR, spatial data, hierarchies, Service Broker, changes to tools, SSIS improvements, Integrated Full Text, Sparse Columns, Filtered Indexes, XQuery changes, Compression, Change Data Capture, Change Tracking, Intellisense, Table Valued Parameters, Script Task in SSIS, Performance Data Collector, Reporting Services

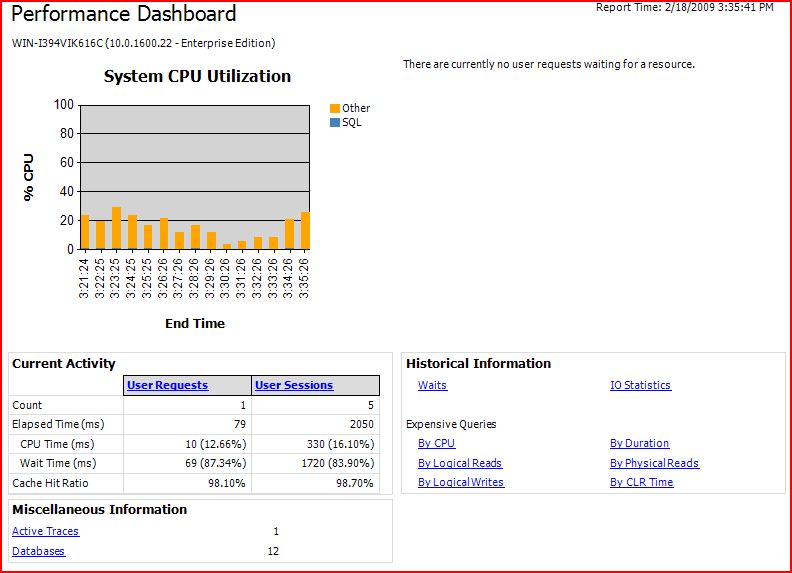

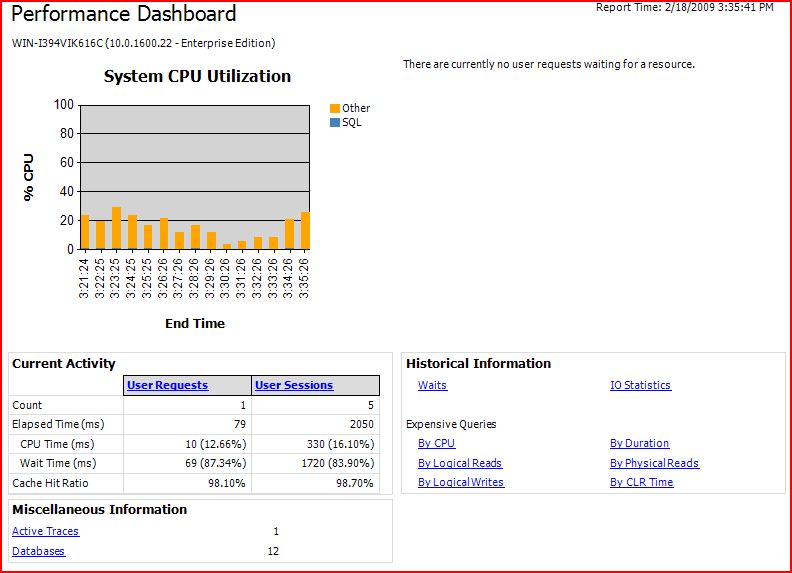

Hosting the Performance Dashboard Reports in SSRS

I blogged a while back about modifying the SQL Server 2005 Performance Dashboard reports to run on SQL Server 2008. I've since been working with several customers who use these reports for performance troubleshooting, but who would like to host them on their Reporting Services platform so they can be viewed online instead of within SQL Server Management Studio. So over the past few days I've been killing time on flights and trains doing just that. So here goes…

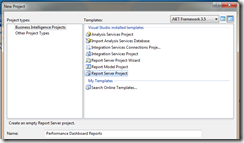

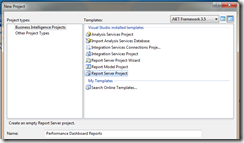

It's actually very simple to get the reports up and running on Reporting Services. All you have to do is install the Performance Dashboard reports, create a new Report Server project in BIDS (or Visual Studio) and import the .rdl files from the directory they were installed to (right-click the solution name in Solution Explorer –> Add –> Existing Item).

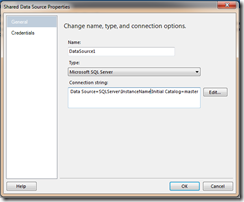

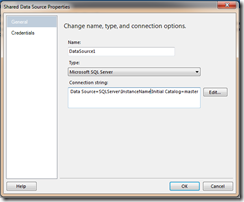

They use a shared data source, so you need to update this to point to the SQL Server instance you want to monitor (making sure that you have enabled the instance for use with the Performance Dashboard reports first), deploy the reports and data source to your Report Server and you're ready to roll.

However, this means that you can only look at one server. To monitor multiple servers, you would need to repeat the process and host a separate copy of the reports for each one… not very scalable !

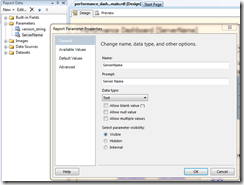

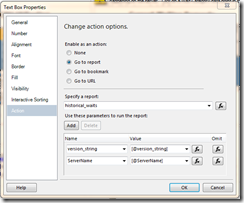

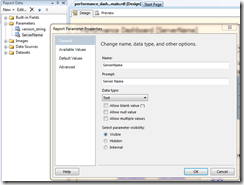

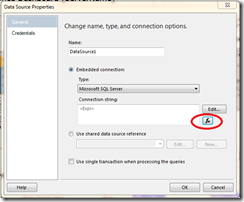

The solution I've come up with (and which you can download in the attached .zip file) requires you to publish the reports only once and use a parameter to dynamically determine which server we want to connect to in our data source. This parameter is set in the Performance_Dashboard_Main.rdl file when you first launch the report and is used as an expression in the report data source to dynamically build the connection string.

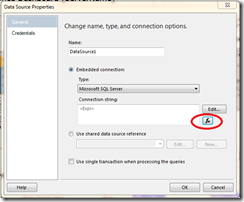

We need to modify the existing data source to be an embedded data source as using expressions in connection strings is not supported with shared data sources.
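The idea behind the expression-based data source is simply string concatenation of a fixed prefix and the server name parameter. The Python sketch below mirrors that logic outside SSRS to show one precaution worth considering when the server name comes from a free-text field: rejecting values that could smuggle extra keywords into the connection string. The function name, the validation pattern and the `msdb` catalog in the usage line are assumptions for this example, not taken from the attached reports.

```python
import re

# Conservative pattern for "host", "host\instance" or "host,port".
# This is an assumption for the sketch; adjust it to your naming rules.
_SERVER_NAME = re.compile(r"^[A-Za-z0-9_.\-]+(\\[A-Za-z0-9_$\-]+)?(,\d+)?$")


def build_connection_string(server_name, database):
    """Build a connection string from a user-supplied server name."""
    if not _SERVER_NAME.match(server_name):
        # A ';' or '=' would let the caller append arbitrary keywords.
        raise ValueError(f"unexpected server name: {server_name!r}")
    return f"Data Source={server_name};Initial Catalog={database}"


# Placeholder server and catalog names.
example = build_connection_string("SQLPROD01\\INST1", "msdb")
```

Populating the parameter from a list of registered servers, as the CMS version of the report does, sidesteps most of this because users can only pick known names.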

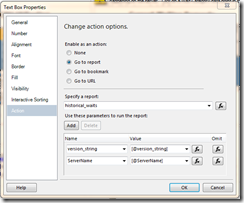

This parameter is then passed through to subsequent linked reports to build the dynamic data source connection for those reports as well.

I've created 2 versions of the Performance_Dashboard_Main report (Performance_Dashboard_Main.rdl and Performance_Dashboard_Main_CMS.rdl) which I've included in the attached solution file. The first one uses a free-text field to enter the server name and the second one uses the new Central Management Server (CMS) functionality in SQL Server 2008 to dynamically populate a drop-down list of servers you have already registered on your CMS… very cool !

These reports have been designed and tested to work on SSRS 2008; however, you can use the same technique to host them on SSRS 2005 as well. The data source for the reports can point to either SQL Server 2005 or 2008 instances (as long as you've followed the instructions for modifying the reports for SQL Server 2008 first). As I've mentioned before, these reports are not a replacement for the fantastic new Management Data Warehouse functionality of SQL Server 2008, but they can provide another valuable tool to help DBAs analyse performance issues.

Download the reports, have a play and let me know what you think !

Performance Dashboard Reports.zip

Hyper-V RC1 Released

Hyper-V Release Candidate 1 for Windows Server 2008 was released on the 20th May. This is a full functionality release and provides improvements to security, stability, performance, user experience, forward compatibility of configurations, and the programming model. Further details and downloads are available here:

https://www.microsoft.com/virtualization/ws08.mspx

It has also been announced that Microsoft have been using Hyper-V in production for several months to host the MSDN and TechNet websites:

https://blogs.technet.com/virtualization/archive/2008/05/20/msdn-and-technet-powered-by-hyper-v.aspx

Indexing Strategies

I attended a SQL Server User Group meeting earlier this year and heard a presentation about favourite DMVs. Mentioned in the discussion was sys.dm_db_index_usage_stats and that got me thinking about indexing strategies. I have been involved in performance troubleshooting databases that have used a variety of indexing strategies, ranging from none to lots of narrow indexes on practically every column ! However, there is no right or wrong indexing strategy; it depends entirely on your application and the type and frequency of queries being executed against the database.

So how do you know if you have a problem? For me, it's usually when users tell me that the application is "running slow" or they get timeouts. However, poor performance can be open to interpretation. There could be a whole host of factors to take into account when you are dealing with web applications, such as network connections and web server problems. This shows that you need to have good benchmarks in place in order to compare performance over a period of time. Another sign could be high CPU, high memory usage or increased disk IO activity. The SQLCAT team has a post detailing the top OLTP performance issues on 2005, and some basic performance counters to monitor can be found here.

So once you've determined that there is a problem, what do you do? If you know the specific query that is causing the issue, then you can check the query execution plan. You do this by choosing the 'Display Estimated Execution Plan' option under the 'Query' menu in Management Studio. This does not execute the query, but returns the estimated execution plan based on the statistics the server has. As a result, you need to ensure that the statistics are up to date, or the plan may be misleading. It's also a good idea to turn on statistics IO. Things to look out for in the execution plan are table or index scans and hash aggregates. Scans imply that there are no indexes for SQL Server to use, or that the indexes are not selective enough and SQL Server has decided it's less expensive to run a scan. Bear in mind that a clustered index scan reads every row, so it is effectively the same as a table scan. Hash aggregates have to create a worktable, i.e. a temp table in TempDB. Watch out for hash aggregates, as statistics IO does not show the cost of the worktables, which can often be very expensive. As a rule of thumb, any time you see "Hash" in your plan it means temp tables and this can be done better ! Another cool thing about showplan is that you can force the queries to use different indexes and run them side by side. This will show the execution plan for both queries and the cost of each relative to the batch. This is a quick way to see which index choices are most expensive.

However, on shared systems with multiple applications running against the SQL Server instance, chances are you will not know the queries that are causing the performance issues and you will need to do some digging. You can use DMVs in 2005, but as I work in a mixed environment (2000 and 2005) I prefer to use Profiler to give me an idea of what is going on. I run a server-side trace and remotely log the results to a file as opposed to a SQL Server table to minimise overhead on the system. I will typically run this for short periods (10 - 15 mins) just to get a feel for the queries executing against the server. This is a quick way to see if there are any expensive queries running and how frequently. This gives me some clues as to which database is causing the problems. Once I know this, I can really focus in on that database application. More information on running Profiler traces is available here.

Once I have identified the database, the hardest part is deciding which queries to index. Lots of frequently run queries can give you a far bigger overall performance gain than a large query run once a month. It is important to use Profiler and run traces over longer periods to get a feel for the mix of reads and writes. Never build an index in isolation; always consider the workload over the course of time. You can then combine these traces with DTA in order to fully analyse your workload and come up with the proper indexing strategy based on your application's specific needs. It is also worth restoring a backup of your database on a test system and using this for running your DTA analysis. I cannot stress highly enough: do not create indexes for indexes' sake. I often ask the question when interviewing, "When would dropping an index actually help to increase performance?" A lot of people are conditioned to believe that you need to build lots of indexes to increase performance, but this is not true. If you have lots of inserts, updates and deletes, then keeping all these indexes updated is a significant overhead if they are not required in the first place.

Before going off and building indexes it is worth looking at alternative options. It is a good idea to update your statistics to ensure that SQL Server has the most up-to-date information to work out the optimal execution plan. If you are working with stored procedures, you can consider recompiling them. You can also consider rewriting the code, especially if you are using cursors!

Generally, SQL Server does better with wider indexes (covering several columns) and fewer of them than it does with narrower ones. Narrow indexes will require a bookmark lookup to get the rest of the data if you don't cover the query. Bookmark lookups are expensive and SQL Server may even decide not to use the index at all and perform a table scan. In this situation, the narrow indexes will not be used and are an unnecessary overhead. You can use the DMV sys.dm_db_missing_index_details to see which multi-column indexes could give better performance. Another word of warning here if you are using the 2005 DMVs, such as sys.dm_db_index_usage_stats. Remember that the data in these DMVs is not persisted across server shutdowns or database restores. Do not assume that because the DMVs show that an index has not been used you can safely drop it. This only means that it hasn't been used since the counters were last reset. If you are going to rely on the DMVs, you need to persist the data over a period of time and then analyse it. This is easy to do as you can select directly from the DMVs into tables, which can then persist the data. A good interval would be to persist the data every 30 mins. Paul Randal has a post here with information on how to persist the index usage data from DMVs.
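One wrinkle when analysing persisted snapshots is that the DMV counters are cumulative and drop back to zero after a reset, so you cannot simply take the latest value. A minimal sketch of one way to total usage across resets is shown below, assuming each snapshot has already been loaded as a dictionary of index name to cumulative read count. The function name, the data shape and the sample figures are all invented for illustration.

```python
def accumulate_usage(snapshots):
    """Total reads per index from cumulative snapshots (oldest first).

    A counter that goes down, or an index that disappears and comes back,
    is treated as a reset and the new value is counted in full.
    """
    totals = {}
    previous = {}
    for snapshot in snapshots:
        for index_name, reads in snapshot.items():
            last = previous.get(index_name, 0)
            # Normal case: add the growth since the previous snapshot.
            # After a reset the counter restarts, so count it all.
            delta = reads - last if reads >= last else reads
            totals[index_name] = totals.get(index_name, 0) + delta
        previous = dict(snapshot)
    return totals


# Made-up snapshots: the third one follows a restart.
snapshots = [
    {"IX_A": 100, "IX_B": 5},
    {"IX_A": 150, "IX_B": 5},
    {"IX_A": 20},
    {"IX_A": 45, "IX_B": 2},
]
totals = accumulate_usage(snapshots)
```

An index whose total stays at zero over a period that includes month-end or other infrequent workloads is a much safer candidate for removal than one that merely shows no usage in the live DMV.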

The final thing I'd like to say about indexing (for the time being !) is that you can't just create indexes and walk away. You need to regularly maintain them in order to reduce fragmentation. Fragmentation in indexes can be caused by inserts, updates and deletes and can be a problem if you have a busy system. By default, indexes are created with 100% fill factor, which means they are densely packed as soon as you create them. If you then need to insert rows or update data, SQL Server will need to carry out page splits in order to do so. To avoid this happening, it is advisable to create indexes with a fill factor of around 80 - 90%; however, this may vary depending on the frequency of data updates. Not only will this increase the performance of inserts and updates, it will also keep fragmentation to a minimum. You can check for fragmentation using DBCC SHOWCONTIG (2000) and sys.dm_db_index_physical_stats (2005), and remove fragmentation using DBCC INDEXDEFRAG or DBCC DBREINDEX in 2000, and ALTER INDEX REORGANIZE or REBUILD in 2005. There is further information and advice here for rebuilding indexes and updating statistics.
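To make the maintenance step concrete, the sketch below turns a fragmentation percentage into the matching 2005-style ALTER INDEX statement. The 5% and 30% cut-offs are commonly quoted starting points rather than figures from this post, so treat them, along with the function name and the sample index, as assumptions to tune for your own system.

```python
def maintenance_statement(index_name, table_name, fragmentation_pct,
                          fill_factor=90, reorganize_from=5.0, rebuild_from=30.0):
    """Return an ALTER INDEX statement, or None if no action is needed."""
    if fragmentation_pct >= rebuild_from:
        # Heavy fragmentation: rebuild and reapply the chosen fill factor.
        return (f"ALTER INDEX [{index_name}] ON {table_name} "
                f"REBUILD WITH (FILLFACTOR = {fill_factor});")
    if fragmentation_pct >= reorganize_from:
        # Moderate fragmentation: the lighter-weight reorganize is enough.
        return f"ALTER INDEX [{index_name}] ON {table_name} REORGANIZE;"
    return None


# Hypothetical index with 42% fragmentation.
statement = maintenance_statement("IX_Orders_Date", "dbo.Orders", 42.0)
```

Feeding the function with the fragmentation figures from sys.dm_db_index_physical_stats gives a script you can review before running anything against the server.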

This is a huge topic and I could go on all day, but the importance of good indexing cannot be stressed highly enough ! In summary, you need to ensure that you understand your application's workload, you need to check that the indexes you have are actually being used and that you are not missing any indexes. Finally, you need to maintain your indexes so they are kept in optimal shape. Good indexing means good performance which means happy users !

[This was originally posted on https://sqlblogcasts.com/ in February 2008]

June SQL Technical Rollup Mail

The June SQL Server Technical Rollup Mail has now been released. Check it out for some great SQL Server information and resources...

https://blogs.technet.com/trm/archive/2008/06/01/june-2008-technical-rollup-mail-sql.aspx

Mirroring Multiple SQL Server Databases on a Single Instance

This is a question I get asked a lot by customers (last week being the latest) and the answer really depends on which platform you are running on. There is a support policy in Books Online that states that we only support a maximum of 10 mirrored databases per instance on 32-bit systems; in the 64-bit world we don't have any such limitation. The limitation in 32-bit systems is due to the number of worker threads required for mirroring sessions and the fact that we are limited to a finite amount of memory for thread allocations.

However, these restrictions do not apply on 64-bit SQL Server systems and the SQLCAT team has just released a whitepaper showing that it's possible to mirror in excess of 100 databases on a single SQL Server instance !! The article also has information to help you calculate the value of max_worker_threads and max server memory appropriately.

The article can be found here and is definitely worth a read if you plan to mirror multiple databases on a 64-bit instance of SQL Server:

https://sqlcat.com/technicalnotes/archive/2010/02/10/mirroring-a-large-number-of-databases-in-a-single-sql-server-instance.aspx

Open Source Error Opens Big Security Hole

A programming error in an open source security project introduces profound vulnerabilities in millions of computer systems.

https://www.technologyreview.com/Infotech/20801/?a=f

OpsDB SQL Server Automation Tools Released

A great set of SQL Server automation tools has just been released by Microsoft onto the SQL Server Community Worldwide site...

https://sqlcommunity.com/ScriptsTools/OpsDBOperationsDatabaseforSQLServer/tabid/275/language/en-US/Default.aspx

These are excellent resources to add to your DBA toolset.

Performance Dashboard Reports for SQL Server 2008

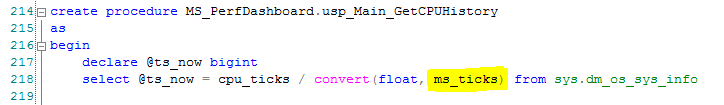

During a recent visit to Seattle for a Microsoft conference, I learned from my colleague Michael Thomassy that it's possible to run the SQL Server 2005 Performance Dashboard reports on SQL Server 2008, with a slight modification. There is a great new feature in SQL Server 2008 called Performance Data Collection, which I have blogged about in the past, and this is excellent for tracking SQL Server performance over time across your 2008 estate. There is also the excellent revamped Activity Monitor in SQL 2008. However, if you want to continue to use the Performance Dashboard reports, which many DBAs have found invaluable, they are not supported in SQL Server 2008. If you try to install the Performance Dashboard reports, you get the following error:

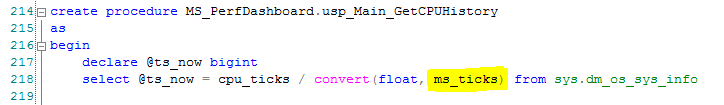

Msg 207, Level 16, State 1, Procedure usp_Main_GetCPUHistory, Line 6

Invalid column name 'cpu_ticks_in_ms'.

Msg 15151, Level 16, State 1, Line 1

Cannot find the object 'usp_Main_GetCPUHistory', because it does not exist or you do not have permission.

This is due to a change in the sys.dm_os_sys_info DMV between SQL Server 2005 and 2008 (the cpu_ticks_in_ms column was removed in 2008; see https://msdn.microsoft.com/en-us/library/ms175048.aspx). Download and install the Performance Dashboard reports as normal (but save the files in the Program Files\Microsoft SQL Server\100\Tools\PerformanceDashboard directory) and then modify the setup.sql file as shown below before running it against your SQL Server 2008 instance.

Please note that SQL Server 2008 has introduced new wait types that the Performance Dashboard reports currently don't handle. I would strongly recommend using the new Management Data Warehouse reports in SQL Server 2008 in order to get the best user experience. However, this workaround will help you get the Performance Dashboard Reports up and running on your SQL Server 2008 instances (see the screenshot below).

Scottish Area SQL Server User Group

I'm happy to announce that the next Scottish SQL Server User Group will be hosted at Microsoft Edinburgh on the evening of the 13th November. Full details of the agenda and how to register can be found on the UK SQL Server User Group site here:

https://sqlserverfaq.com/events/144/Scottish-Area-SQL-Server-User-Group-Jammin-SQL-Server-2008.aspx

The theme of the meeting is "Jammin' with SQL Server 2008", so I'm looking forward to some freestyle SQL Server 2008 demos and discussions !
